
In the vast and intricate world of real-time rendering and interactive experiences, visuals often take center stage. High-fidelity 3D models, stunning environments, and cutting-edge rendering techniques like those found in Unreal Engine are undeniably crucial. However, the true magic of immersion often lies in an element frequently overlooked: sound. For automotive visualization, game development, and interactive configurators—where the roar of an engine or the subtle click of a virtual button can elevate an experience from good to unforgettable—a meticulously crafted audio system is paramount. Imagine showcasing a breathtaking 3D car model, perhaps sourced from 88cars3d.com, only to have its visual splendor undermined by flat, unconvincing sound. It’s a disconnect that can instantly break immersion.

Unreal Engine offers a robust and highly flexible audio system, empowering developers and artists to sculpt rich, spatial soundscapes that complement their visual masterpieces. This comprehensive guide will delve into the intricacies of Unreal Engine’s audio capabilities, focusing on spatial sound design, advanced mixing techniques, and performance optimization. We’ll explore how to harness features like attenuation, submixes, and MetaSounds to create truly dynamic and realistic audio experiences, especially tailored for projects featuring high-quality 3D car models. By the end of this article, you’ll have a deeper understanding of how to transform your Unreal Engine projects from merely looking good to sounding spectacular, providing a complete sensory journey for your audience.

At the heart of Unreal Engine’s audio system lies a powerful and modular architecture designed for flexibility and scalability. Understanding this foundation is crucial for any developer aiming to create compelling sound experiences, particularly in automotive visualization where the nuances of engine sounds, interior acoustics, and environmental ambience play a significant role. The primary building blocks are Sound Waves, Sound Cues, Sound Classes, and Submixes, each serving a distinct purpose in shaping the final audio output.

Sound Waves are the raw audio files (WAV, OGG, FLAC) imported into Unreal Engine. These are the fundamental sound assets that represent everything from an engine idle loop to a tire screech or a UI click. While simple, they are the source of all auditory information. Once imported, they can be configured with various properties like compression settings, sample rates, and streaming behavior to optimize performance and memory usage, a critical consideration for large projects featuring numerous high-resolution car models and their associated sounds.

Sound Cues act as containers and processors for Sound Waves. Think of them as mini-blueprints for audio. They allow you to combine, manipulate, and spatialize multiple Sound Waves, apply randomization, sequencing, and even basic effects. For instance, a single Sound Cue could blend multiple engine sound layers (idle, low RPM, high RPM) based on a vehicle’s speed parameter, or randomly play different door-closing sounds for variety. This node-based editor provides a visual workflow for complex sound design without writing any code. For a car’s engine, a Sound Cue might combine a base engine loop, a rev sound, and even a distant exhaust rumble, all controlled by a single parameter.
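To make the RPM-driven blend concrete, here is a minimal, engine-independent C++ sketch of how per-layer gains could be computed from a single parameter, similar in spirit to a crossfade-by-parameter setup in a Sound Cue. The layer names and RPM ranges are hypothetical values chosen for illustration, and this is not Unreal's internal implementation.

```cpp
#include <cassert>
#include <string>
#include <vector>

// One layer of a layered engine sound, described by the parameter (RPM) range
// over which it fades in and then fades out.
struct CrossfadeLayer {
    std::string name;
    float fadeInStart;   // fade-in begins here (gain 0)
    float fadeInEnd;     // full gain from here...
    float fadeOutStart;  // ...until here
    float fadeOutEnd;    // fade-out ends here (gain 0)
};

// Linear gain (0..1) of one layer for the current parameter value.
float LayerGain(const CrossfadeLayer& layer, float value) {
    assert(layer.fadeInStart <= layer.fadeInEnd);
    assert(layer.fadeInEnd <= layer.fadeOutStart);
    assert(layer.fadeOutStart <= layer.fadeOutEnd);
    if (value < layer.fadeInStart || value > layer.fadeOutEnd) return 0.0f;
    if (value < layer.fadeInEnd)  // rising edge
        return (value - layer.fadeInStart) / (layer.fadeInEnd - layer.fadeInStart);
    if (value <= layer.fadeOutStart) return 1.0f;  // plateau
    // falling edge
    return (layer.fadeOutEnd - value) / (layer.fadeOutEnd - layer.fadeOutStart);
}

// Gains for every layer at once, e.g. evaluated each tick with the current RPM.
std::vector<float> LayerGains(const std::vector<CrossfadeLayer>& layers, float rpm) {
    std::vector<float> gains;
    gains.reserve(layers.size());
    for (const CrossfadeLayer& layer : layers) gains.push_back(LayerGain(layer, rpm));
    return gains;
}
```

With ranges such as idle (0 to 2000), low (1000 to 4500), and high (3500 upward), 1500 RPM plays the idle and low layers at half gain each. Linear fades like these dip by about 3 dB in perceived loudness at the midpoint when the layers are uncorrelated, so an equal-power curve is a common alternative.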

Sound Classes provide a hierarchical system for organizing and controlling groups of sounds. They allow you to apply global volume, pitch, and attenuation settings to categories of sounds, such as “Engine Sounds,” “UI Sounds,” “Environmental Ambience,” or “Music.” This inheritance system means you can create a master “Automotive Sounds” class, and then child classes like “Sports Car Engine” or “Electric Car Whine” will inherit its base properties but can also override them. This makes managing complex audio mixes incredibly efficient, especially when dealing with multiple car models, each with distinct sound profiles. For more details on managing your audio assets, refer to the official Unreal Engine documentation on Sound Cues and Sound Classes.

Effective management of your sound assets begins with a well-organized folder structure within the Unreal Editor. Just as you would categorize your 3D car models (e.g., /Vehicles/SportsCar/Materials, /Vehicles/SportsCar/Meshes), your audio should follow a similar logic (e.g., /Audio/Vehicles/EngineSounds, /Audio/UI, /Audio/Environment). When importing Sound Waves, pay close attention to their properties. For transient sounds like gear shifts or button clicks, a higher sample rate and uncompressed format might be acceptable, but for long looping engine sounds or ambience, efficient compression (like ADPCM or Opus) and potentially lower sample rates can significantly reduce memory footprint without noticeable quality loss, especially important for VR or mobile automotive experiences.

Use consistent naming prefixes (e.g., A_Engine_Idle_SportsCar01, SC_UI_ButtonPress) to quickly identify assets.

Sound Classes are indispensable for creating a scalable and manageable audio mix. By establishing a robust Sound Class hierarchy, you gain granular control over various audio elements. For instance, you can define a root “Master” class, with child classes for “Music,” “SFX,” and “Dialogue.” Under “SFX,” you might have “VehicleSFX,” which then branches into “EngineSFX,” “TireSFX,” and “InteractionSFX.” This allows you to adjust the volume of all engine sounds globally, or specifically target the volume of only sports car engines, without modifying individual Sound Cues. Crucially, Sound Class settings can be adjusted at runtime from Blueprint (typically through Sound Mix nodes such as Set Sound Mix Class Override and Push Sound Mix Modifier), enabling control by users (e.g., volume sliders in an options menu) or by game logic (e.g., lowering music volume during an important cinematic featuring a car). This systematic approach ensures that even as your project grows with more detailed car models and complex interactions, your audio mix remains coherent and easily adjustable.
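The inheritance idea can be modeled in a few lines. The sketch below assumes, as a simplification, that each class's volume multiplier is multiplied by every ancestor's, so lowering “SFX” also lowers “EngineSFX”; Unreal's real Sound Class and Sound Mix behavior offers more options than this. The class names reuse the example hierarchy above, and `SoundClassTree` is a hypothetical helper, not an engine type.

```cpp
#include <cassert>
#include <map>
#include <string>

// Simplified sound-class node: its own volume multiplier plus its parent's
// name ("" marks the root).
struct SoundClassNode {
    std::string parent;
    float volume;
};

class SoundClassTree {
public:
    // Parents must be added before their children, which also rules out cycles.
    void Add(const std::string& name, const std::string& parent, float volume = 1.0f) {
        assert(!name.empty());
        assert(parent.empty() || nodes_.count(parent) == 1);
        nodes_[name] = SoundClassNode{parent, volume};
    }

    void SetVolume(const std::string& name, float volume) { nodes_.at(name).volume = volume; }

    // Effective volume = product of the multipliers from this class up to the root.
    float EffectiveVolume(const std::string& name) const {
        float result = 1.0f;
        for (std::string current = name; !current.empty();) {
            const SoundClassNode& node = nodes_.at(current);
            result *= node.volume;
            current = node.parent;
        }
        return result;
    }

private:
    std::map<std::string, SoundClassNode> nodes_;
};
```

For example, setting “SFX” to 0.5 while “EngineSFX” sits at 0.8 yields an effective engine volume of 0.4, and every other vehicle sound is halved too, without touching any individual Sound Cue.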

Spatial audio is the cornerstone of immersive sound design, allowing listeners to perceive the direction and distance of sound sources within a 3D environment. For automotive visualization and games, this means accurately positioning engine roars, tire squeals, and even the subtle creaks of a car interior within the virtual space. Unreal Engine provides a comprehensive set of tools to achieve this, making your high-quality car models from 88cars3d.com not just look real, but also sound authentic and spatially convincing.

The primary mechanism for spatialization is Attenuation Settings. These define how a sound’s volume and other properties change based on its distance from the listener. Every sound in Unreal Engine can have an Attenuation Settings asset assigned to it, or it can override the settings directly on the sound. Within these settings, you configure several key parameters: the attenuation shape and inner radius, the falloff distance, the attenuation function (the falloff curve), the spatialization method, and distance-based low-pass filtering. The inner radius defines the distance within which the sound plays at full volume, and the falloff distance, measured outward from the inner radius, sets where it becomes completely inaudible. The attenuation function determines how the volume decreases across that falloff range, with options such as linear, logarithmic, inverse, log reverse, natural sound, or a custom curve. For a realistic engine sound, you’d want a pronounced falloff, where the engine is loud up close but fades convincingly with distance.
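As a rough illustration of the falloff math, the following C++ sketch computes a gain for a sphere-shaped source with an inner radius and a falloff distance. The linear case is straightforward; the logarithmic case here interpolates in decibels down to an assumed -60 dB floor, which is one common way to build such a curve and not necessarily Unreal's exact formula. Distances are in Unreal units (centimeters).

```cpp
#include <cassert>
#include <cmath>

enum class Falloff { Linear, Logarithmic };

// Gain (0..1) for a listener `distance` units from a sphere-shaped source.
// Full volume inside innerRadius; silent at innerRadius + falloffDistance and beyond.
float AttenuationGain(float distance, float innerRadius, float falloffDistance,
                      Falloff curve, float dbAtMax = -60.0f) {
    assert(innerRadius >= 0.0f && falloffDistance > 0.0f);
    if (distance <= innerRadius) return 1.0f;
    const float t = (distance - innerRadius) / falloffDistance;  // 0..1 across the falloff
    if (t >= 1.0f) return 0.0f;
    switch (curve) {
        case Falloff::Linear:
            return 1.0f - t;
        case Falloff::Logarithmic:
            // Move linearly in decibels toward dbAtMax, then convert to linear gain.
            return std::pow(10.0f, (dbAtMax * t) / 20.0f);
    }
    return 0.0f;
}
```

With an inner radius of 400 (4 m) and a falloff distance of 3600, a listener at 2200 hears the linear curve at half gain, while this logarithmic curve is already about 30 dB down. The choice of curve therefore changes how far an engine seems to carry as much as the radii do.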

Unreal Engine also offers different spatialization methods: Panning (amplitude panning across the output speaker layout) and Binaural (HRTF-based, for headphones, providing a more convincing 3D effect), while Ambisonics is supported separately for soundfield (multi-channel spatial) audio. For headphone-based experiences, Binaural is often preferred for its strong sense of directionality, especially in VR automotive experiences. Additionally, Attenuation Settings can apply a low-pass filter that closes down as the sound travels further (air absorption), simulating the absorption of high frequencies by air and environmental elements and making distant sounds muffled and more realistic. This is vital for differentiating a nearby car’s engine from one further down the road. Understanding and carefully configuring these settings can transform a flat soundscape into a dynamic, believable auditory world that truly brings your automotive scenes to life.
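The distance-based low-pass filter can be sketched the same way: map distance to a cutoff frequency between a near value and a far value. Interpolating in log-frequency (octaves) is a design choice made here so the muffling progresses evenly to the ear; the function name, parameters, and example values are assumptions, not Unreal's air absorption defaults.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Low-pass cutoff (Hz) for a source at `distance`, sweeping from cutoffNearHz at
// minDistance down to cutoffFarHz at maxDistance (clamped outside that range).
// Interpolates in log2(frequency), i.e. evenly in octaves.
float AirAbsorptionCutoffHz(float distance, float minDistance, float maxDistance,
                            float cutoffNearHz, float cutoffFarHz) {
    assert(maxDistance > minDistance);
    assert(cutoffNearHz > 0.0f && cutoffFarHz > 0.0f);
    const float t = std::clamp((distance - minDistance) / (maxDistance - minDistance), 0.0f, 1.0f);
    const float logNear = std::log2(cutoffNearHz);
    const float logFar = std::log2(cutoffFarHz);
    return std::exp2(logNear + (logFar - logNear) * t);
}
```

For example, sweeping from 20 kHz at 500 units to 5 kHz at 5000 units puts the cutoff at 10 kHz halfway through the range, one octave below the starting point.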

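To implement effective spatial audio, you’ll work primarily with Attenuation Settings assets. Create a new asset (Right-click in Content Browser > Sounds > Sound Attenuation) and assign it to your Sound Cues. The crucial properties are the ones outlined above: the inner radius and falloff distance, the falloff curve, the spatialization method, and distance-based low-pass filtering.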

It’s good practice to create different Attenuation Settings presets for various sound types: one for general SFX, one for player character sounds, and perhaps specific ones for vehicle engines (with larger radii) and UI sounds (often non-spatialized or with minimal attenuation).

Beyond simple distance attenuation, Unreal Engine offers features to simulate sound occlusion and environmental effects, further enhancing realism. Sound Occlusion allows sounds to be muffled or blocked when obstacles (like walls or other cars) are between the sound source and the listener. This is handled by a combination of the Attenuation Settings (enabling ‘Enable Occlusion’) and Unreal Engine’s physics system. When a Line Trace (raycast) from the listener to the sound source is blocked by an object, the sound can be attenuated, low-pass filtered, or even completely cut off. For an automotive scenario, this means an engine sound behind a building will naturally sound different than one in open air.
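Because a line trace returns a simple blocked or unblocked result, occlusion is usually ramped over a short time rather than switched instantly, which avoids audible jumps. The sketch below models that ramp and derives a volume multiplier and a low-pass cutoff from it. The struct fields and default values are hypothetical stand-ins for the occlusion options in Attenuation Settings, and the trace itself is assumed to happen elsewhere.

```cpp
#include <algorithm>
#include <cassert>

// Per-source occlusion amount: 0 = clear line of sight, 1 = fully occluded.
struct OcclusionState {
    float amount = 0.0f;
};

// Hypothetical tuning values (illustrative, not engine defaults).
struct OcclusionSettings {
    float interpolationTime = 0.1f;   // seconds to ramp fully between clear and occluded
    float occludedVolume = 0.5f;      // volume multiplier when fully occluded
    float occludedCutoffHz = 800.0f;  // low-pass cutoff when fully occluded
    float clearCutoffHz = 20000.0f;   // cutoff with nothing in the way
};

// Moves the occlusion amount toward the latest trace result at a fixed rate.
void UpdateOcclusion(OcclusionState& state, bool traceBlocked, float deltaSeconds,
                     const OcclusionSettings& s) {
    assert(deltaSeconds >= 0.0f && s.interpolationTime > 0.0f);
    const float target = traceBlocked ? 1.0f : 0.0f;
    const float step = deltaSeconds / s.interpolationTime;
    if (state.amount < target) state.amount = std::min(target, state.amount + step);
    else state.amount = std::max(target, state.amount - step);
}

float OccludedVolume(const OcclusionState& state, const OcclusionSettings& s) {
    return 1.0f + (s.occludedVolume - 1.0f) * state.amount;
}

float OccludedCutoffHz(const OcclusionState& state, const OcclusionSettings& s) {
    return s.clearCutoffHz + (s.occludedCutoffHz - s.clearCutoffHz) * state.amount;
}
```

An engine that slips behind a building therefore fades to its muffled state over about a tenth of a second instead of snapping there on the frame the trace first hits.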

Audio Volumes (often called reverb volumes) are used to simulate environmental acoustics like echoes in a tunnel or a large hangar. You place one in your level, and its reverb settings are applied while the listener is inside its bounds; where no volume applies, the level's default reverb settings in World Settings are used. Each volume's interior (Ambient Zone) settings can additionally attenuate or filter sounds depending on whether the source and the listener are inside or outside it. For car models, this is invaluable for creating believable interior acoustics or making an engine roar sound appropriate whether it’s in a vast outdoor environment or an enclosed parking garage. Combining spatialization with occlusion and reverb creates a truly dynamic and believable audio experience, crucial for the highly immersive environments often built around premium 3D assets from platforms like 88cars3d.com.
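In Unreal, the reverb an audio volume applies follows the listener's position, and overlapping volumes are resolved by priority. That selection step can be modeled as picking the highest-priority zone containing the listener and falling back to a default. This is a simplified sketch: real volumes can have arbitrary brush shapes, whereas the hypothetical `ReverbZone` here is an axis-aligned box, and the preset names are made up.

```cpp
#include <cassert>
#include <string>
#include <vector>

struct Vec3 { float x, y, z; };

// Axis-aligned box standing in for an audio volume's bounds (simplification).
struct ReverbZone {
    std::string reverbPreset;
    float priority;
    Vec3 lo, hi;  // box corners

    bool Contains(const Vec3& p) const {
        return p.x >= lo.x && p.x <= hi.x && p.y >= lo.y && p.y <= hi.y &&
               p.z >= lo.z && p.z <= hi.z;
    }
};

// Reverb for the listener's position: the highest-priority zone that contains
// the listener, otherwise the level-wide default.
std::string ActiveReverb(const std::vector<ReverbZone>& zones, const Vec3& listener,
                         const std::string& defaultPreset) {
    const ReverbZone* best = nullptr;
    for (const ReverbZone& zone : zones) {
        if (zone.Contains(listener) && (best == nullptr || zone.priority > best->priority)) {
            best = &zone;
        }
    }
    return best != nullptr ? best->reverbPreset : defaultPreset;
}
```

Giving a small cabin zone a higher priority than the garage around it lets the tight interior reverb win while the listener sits in the car, and the garage reverb take over as soon as they step out.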

A great soundscape is more than just a collection of spatially accurate sounds; it’s a meticulously blended symphony where each element finds its place without overpowering or being lost. This is where advanced mixing techniques using Unreal Engine’s Submixes and effect processing come into play. A well-mixed project ensures clarity, dynamic range, and overall audio coherence, which is essential for professional automotive visualizations and interactive applications.

Submixes are powerful audio routing and processing tools. They allow you to group multiple Sound Cues or Sound Classes and route their output through a shared processing chain. Imagine you have dozens of different engine sounds, tire squeals, and car-related UI sounds. Instead of applying reverb individually to each Sound Cue, you can route all “Automotive SFX” through a dedicated Submix. On this Submix, you can then apply a single Reverb effect that affects all sounds routed through it. This not only saves processing power by avoiding redundant effects but also ensures consistency in your environmental reverb and overall mix. Submixes can also be chained together, allowing for complex routing: for example, all engine sounds go to an “Engine Submix” for compression, then that output goes to a “Vehicle Master Submix” which then goes to the “Master Output Submix.” This hierarchical structure provides incredible control over your audio signal flow.
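To see why shared effects save work, consider a toy submix graph in which each submix sums its inputs, runs one effect pass (modeled here as a plain gain), and forwards the result to its parent. The submix names follow the routing example above; `SubmixGraph` is a hypothetical illustration of the signal flow, not Unreal's mixer.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <vector>

// Toy submix graph: each submix sums its inputs, applies one "effect" (a gain
// standing in for EQ, compression, or reverb) and forwards to its parent.
class SubmixGraph {
public:
    // Parents must be added before children ("" parent = master output).
    void AddSubmix(const std::string& name, const std::string& parent, float effectGain) {
        assert(parent.empty() || submixes_.count(parent) == 1);
        submixes_[name] = Submix{parent, effectGain, 0.0f};
        order_.push_back(name);
    }

    // A voice sends one sample into a submix.
    void Send(const std::string& submix, float sample) { submixes_.at(submix).input += sample; }

    // Processes children before parents (reverse insertion order) and returns the
    // final output. Optionally counts how many effect passes ran.
    float Process(int* effectRuns = nullptr) {
        float master = 0.0f;
        for (auto it = order_.rbegin(); it != order_.rend(); ++it) {
            Submix& s = submixes_.at(*it);
            const float out = s.input * s.effectGain;  // one effect pass per submix
            if (effectRuns != nullptr) ++*effectRuns;
            s.input = 0.0f;
            if (s.parent.empty()) master += out;
            else submixes_.at(s.parent).input += out;
        }
        return master;
    }

private:
    struct Submix {
        std::string parent;
        float effectGain;
        float input;
    };
    std::map<std::string, Submix> submixes_;
    std::vector<std::string> order_;
};
```

Twelve engine voices feeding one engine submix still cost one effect pass per submix, three in total for an Engine, Vehicle Master, and Master Output chain, rather than one pass per voice.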

Unreal Engine also provides a suite of built-in Audio Effects that can be applied directly to Submixes. These include common processors like Equalizers (EQ), Compressors, Reverbs, Delays, and Chorus effects. For automotive audio, EQ is invaluable for shaping the tonal characteristics of an engine sound, removing muddy frequencies, or enhancing punch. Compression can be used to control the dynamic range of an engine, making sure it doesn’t clip at high RPMs but also doesn’t get lost at idle. Reverb is crucial for simulating environmental acoustics, from the subtle reflections inside a car cabin to the expansive echo of an underground garage. By strategically applying these effects, you can sculpt a professional-grade audio mix that perfectly complements the visual fidelity of your 3D car models.

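Designing a robust Submix architecture starts with identifying logical groupings of sounds. A typical setup might route engine sounds to an “Engine Submix,” cabin sounds to an “Interior SFX Submix,” and interface sounds to a “UI SFX Submix,” with the vehicle submixes feeding a “Vehicle Master Submix” and everything ending at the “Master Output Submix.”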

Each Sound Class can specify which Submix it outputs to. This granular control allows you to apply unique effects chains to different categories of sounds. For instance, you could apply a subtle low-cut EQ and a very short, tight reverb to the “Interior SFX Submix” to simulate the enclosed space of a car cabin, while the “Engine Submix” might get a multi-band compressor and a different reverb profile for external sound. This modularity means you can adjust the overall loudness of all engine sounds, for example, without affecting tire squeals, or apply a global effect to all SFX while keeping music untouched.

Effective use of Equalization (EQ) and Compression can significantly enhance the perceived quality and realism of your automotive audio. For engine sounds, EQ is your primary tool for shaping tone. Use a parametric EQ effect on your “Engine Submix” to cut muddy frequencies and bring out the ranges that give the engine its punch.

Compression helps manage the dynamic range. An engine sound can vary greatly in volume from idle to full throttle. Applying a compressor with a gentle ratio (e.g., 2:1 or 3:1) and an appropriate threshold on the “Engine Submix” can tame these peaks and troughs, making the sound more consistent and preventing clipping. A slower attack lets the transient punch of a rev through before gain reduction kicks in, while the release time controls how quickly the compressor recovers afterwards. For UI sounds, a limiter on the “UI SFX Submix” can ensure button clicks never exceed a set loudness. Unreal Engine’s built-in effects are robust, but for complex sound design you might consider third-party audio middleware with Unreal integrations, such as Wwise or FMOD, if your project demands that level of functionality. You can find more detailed information on Unreal Engine’s audio effects in the official documentation.
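The compressor behavior described here comes down to two pieces: a static gain curve set by threshold and ratio, and an envelope follower whose attack and release times decide how fast the gain reacts. The sketch below shows both in standalone C++; it illustrates the general technique, not the internals of Unreal's dynamics processor, and the example settings are arbitrary.

```cpp
#include <cassert>
#include <cmath>

// Static curve of a downward compressor: gain change in dB (<= 0) for an input
// level in dBFS. Above the threshold, only 1/ratio of the overshoot is kept.
float CompressorGainDb(float inputDb, float thresholdDb, float ratio) {
    assert(ratio >= 1.0f);
    if (inputDb <= thresholdDb) return 0.0f;
    const float overshoot = inputDb - thresholdDb;
    return -overshoot * (1.0f - 1.0f / ratio);
}

// One-pole envelope follower (in dB) with separate attack and release times.
// A longer attack lets short transients through before the level estimate rises.
class EnvelopeFollower {
public:
    EnvelopeFollower(float attackSeconds, float releaseSeconds, float sampleRate)
        : attackCoef_(Coef(attackSeconds, sampleRate)),
          releaseCoef_(Coef(releaseSeconds, sampleRate)) {}

    // Feed one level sample; returns the smoothed level the compressor reacts to.
    float Process(float levelDb) {
        const float coef = levelDb > envelopeDb_ ? attackCoef_ : releaseCoef_;
        envelopeDb_ = coef * envelopeDb_ + (1.0f - coef) * levelDb;
        return envelopeDb_;
    }

private:
    static float Coef(float seconds, float sampleRate) {
        assert(seconds > 0.0f && sampleRate > 0.0f);
        return std::exp(-1.0f / (seconds * sampleRate));
    }

    float attackCoef_;
    float releaseCoef_;
    float envelopeDb_ = -96.0f;  // start near silence
};
```

At a 2:1 ratio with a -12 dB threshold, a -6 dB peak is reduced by 3 dB, since only half of the 6 dB overshoot is kept; a 3:1 ratio on a 9 dB overshoot removes 6 dB.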

Static soundscapes, no matter how well-mixed or spatially accurate, can only go so far. The true power of Unreal Engine’s audio system shines when sounds become interactive and reactive, driven by gameplay events, user input, and dynamic parameters. This is where Blueprint visual scripting and the groundbreaking MetaSounds system become indispensable tools, especially for creating immersive automotive experiences with highly interactive 3D car models.

Blueprint visual scripting offers a powerful and accessible way to trigger, modify, and control audio in response to virtually any event within your Unreal Engine project. Instead of writing C++ code, you can visually connect nodes to define complex logic. For automotive applications, this opens up a wealth of possibilities: playing an engine start sound when a car’s ignition is turned on, varying engine RPM sounds based on throttle input, playing tire screech sounds when sliding, or triggering specific UI sounds in an interactive car configurator when a user selects a paint color or wheel type. You can use Blueprint to dynamically change volume, pitch, spatialization settings, or even swap out entire Sound Cues based on factors like vehicle speed, damage state, or proximity to other objects. This allows for an incredibly responsive and believable audio experience that dynamically adapts to the user’s actions and the environment.
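As an example of the slip-driven logic such a Blueprint might implement, here is a C++ sketch of a tire-squeal controller. The slip input, the thresholds, and the use of two thresholds (hysteresis, so the sound does not flicker on and off around a single value) are assumptions made for illustration; in a project, the returned volume would be passed to whatever audio component plays the squeal.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical tire-squeal controller. `slip` is a 0..1 measure of how hard a
// tire is sliding, however your vehicle code computes it.
class TireSquealController {
public:
    TireSquealController(float startSlip, float stopSlip, float fullVolumeSlip)
        : startSlip_(startSlip), stopSlip_(stopSlip), fullVolumeSlip_(fullVolumeSlip) {
        assert(stopSlip_ < startSlip_ && startSlip_ < fullVolumeSlip_);
    }

    // Returns the squeal volume (0..1) for this tick; 0 means "not playing".
    float Update(float slip) {
        // Hysteresis: start above startSlip, stop only once below stopSlip.
        if (!playing_ && slip >= startSlip_) playing_ = true;
        else if (playing_ && slip <= stopSlip_) playing_ = false;
        if (!playing_) return 0.0f;
        return std::clamp((slip - stopSlip_) / (fullVolumeSlip_ - stopSlip_), 0.0f, 1.0f);
    }

    bool IsPlaying() const { return playing_; }

private:
    float startSlip_;
    float stopSlip_;
    float fullVolumeSlip_;
    bool playing_ = false;
};
```

Once a slide starts, the squeal keeps playing (more quietly) while slip stays between the two thresholds, which reads as a continuous slide rather than a stutter.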

Stepping beyond the traditional Sound Cues, Unreal Engine 5 introduced MetaSounds – a revolutionary high-performance audio system for generating and modifying sound procedurally. Unlike Sound Cues which primarily manipulate pre-recorded audio, MetaSounds allows you to synthesize sounds from scratch, process live audio, and create highly complex, dynamic, and reactive audio assets entirely within Unreal Engine. Imagine designing an engine sound where every component – the cylinder firing, the exhaust rumble, the turbo whine – is a procedural waveform, all modulated in real-time by vehicle parameters like RPM, load, and gear. This offers unparalleled flexibility and realism, enabling sounds that are never perfectly identical, mimicking the subtle variations of real-world phenomena. MetaSounds are perfect for creating adaptive music, dynamic environmental ambience, and exceptionally realistic and customizable vehicle sounds that evolve with the gameplay or interaction.
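A procedural engine needs a fundamental frequency to drive its oscillators. For a four-stroke engine, each cylinder fires once every two crankshaft revolutions, which gives the small formula below. How you feed this value into a MetaSound (for example, into an oscillator's frequency input) is left to your graph, and the function name is hypothetical.

```cpp
#include <cassert>

// Fundamental firing frequency of a four-stroke engine. Each cylinder fires
// once every two crankshaft revolutions, so:
//   firingHz = (rpm / 60) * cylinders / 2
float FourStrokeFiringHz(float rpm, int cylinders) {
    assert(rpm >= 0.0f && cylinders > 0);
    return rpm / 60.0f * static_cast<float>(cylinders) / 2.0f;
}
```

A V8 at 6000 RPM fires at (6000 / 60) * 8 / 2 = 400 Hz, and a four-cylinder idling at 900 RPM fires at 30 Hz. Real engines also produce strong harmonics and noise, so this is only the starting point of a convincing synthesis.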

Implementing interactive audio with Blueprint involves a few key nodes. Play Sound at Location is crucial for spatial audio: it takes a sound asset (such as a Sound Wave or Sound Cue) plus a world location and an optional rotation.

For a car, you might have a Blueprint on the vehicle that takes the current engine RPM (a float variable) and passes it to an “Engine_MetaSound” via a “Set Float Parameter” node on every tick. The MetaSound then uses this RPM value to procedurally generate and modulate the engine sound in real time. Similarly, a car configurator might have Blueprint logic that plays a unique “material change” sound effect when the user cycles through different paint finishes, or a satisfying click when a new wheel rim, perhaps a highly detailed model from 88cars3d.com, is selected.
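Sending raw RPM every tick can make the sound jitter when the simulation value jumps. One option, shown here as a sketch rather than a requirement, is to smooth the value with a frame-rate-independent exponential filter before it reaches the Set Float Parameter call. `ParameterSmoother` and the time constant are hypothetical, and the engine-side call is not shown.

```cpp
#include <cassert>
#include <cmath>

// Smooths a value updated once per tick (e.g. engine RPM before it is sent to a
// sound parameter) so the result does not depend on the frame rate.
class ParameterSmoother {
public:
    explicit ParameterSmoother(float timeConstantSeconds, float initial = 0.0f)
        : tau_(timeConstantSeconds), value_(initial) {
        assert(tau_ > 0.0f);
    }

    // Exponential approach toward `target`: after tau_ seconds, about 63% of
    // the remaining gap has been closed, whatever the tick length.
    float Tick(float target, float deltaSeconds) {
        assert(deltaSeconds >= 0.0f);
        const float alpha = 1.0f - std::exp(-deltaSeconds / tau_);
        value_ += (target - value_) * alpha;
        return value_;
    }

    float Value() const { return value_; }

private:
    float tau_;
    float value_;
};
```

Because the blend factor is derived from the elapsed time, ticking at 60 Hz or at 30 Hz reaches essentially the same value after one second, so the engine sound responds identically on fast and slow machines.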

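MetaSounds represent a paradigm shift in Unreal Engine audio. Instead of merely playing back recorded samples, you’re building instruments and effect graphs. For automotive audio, this means an engine can be designed as an instrument whose sound responds directly to vehicle parameters, rather than as a fixed set of recordings.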

MetaSounds use a node-based graph editor similar to the Blueprint and Material editors. You connect various nodes like Wave Players, Oscillators, Envelopes, Filters, Delays, and Mixers to construct complex audio logic. Exposing input parameters allows Blueprint to control the MetaSound’s behavior dynamically. This deep integration makes MetaSounds a powerful tool for creating responsive, truly immersive audio experiences for your automotive projects. To dive deeper, the Unreal Engine documentation on MetaSounds is an excellent resource.
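To illustrate what a small node chain computes, here is a C++ analogue of three connected nodes: a sawtooth oscillator, a one-pole low-pass filter, and an output gain, rendered into a buffer. It is a conceptual sketch only: the oscillator is naive (it aliases at high frequencies), and it does not reflect how MetaSound nodes are actually implemented.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Sawtooth oscillator -> one-pole low-pass filter -> output gain, rendered
// sample by sample into a buffer.
std::vector<float> RenderSawLowPass(float frequencyHz, float cutoffHz, float gain,
                                    float sampleRate, int numSamples) {
    assert(frequencyHz > 0.0f && cutoffHz > 0.0f && sampleRate > 0.0f && numSamples >= 0);
    const float kPi = 3.14159265358979f;
    // Smoothing coefficient for a one-pole low-pass at cutoffHz.
    const float alpha = 1.0f - std::exp(-2.0f * kPi * cutoffHz / sampleRate);
    std::vector<float> out(static_cast<std::size_t>(numSamples));
    float phase = 0.0f;     // oscillator phase in [0, 1)
    float filtered = 0.0f;  // filter state
    for (int i = 0; i < numSamples; ++i) {
        const float saw = 2.0f * phase - 1.0f;                  // naive sawtooth in [-1, 1)
        filtered += alpha * (saw - filtered);                   // one-pole low-pass
        out[static_cast<std::size_t>(i)] = gain * filtered;     // output gain
        phase += frequencyHz / sampleRate;                      // advance the oscillator
        phase -= std::floor(phase);                             // wrap to [0, 1)
    }
    return out;
}
```

Lowering `cutoffHz` darkens the tone in the same way that closing a filter node does in a graph, and exposing `frequencyHz` and `cutoffHz` as inputs corresponds to the exposed parameters Blueprint would drive.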

While an elaborate audio system can elevate immersion, it’s crucial to balance realism with performance. Poorly optimized audio can lead to significant CPU overhead, increased memory usage, and even hitches, especially in performance-sensitive applications like VR automotive configurators or high-fidelity driving simulations. Implementing best practices and focusing on optimization is as critical for audio as it is for the visual rendering of complex 3D car models. A performant audio system ensures a smooth, uninterrupted experience for the user, allowing them to fully appreciate the detail of both the sound and the visual assets from platforms like 88cars3d.com.

One of the primary concerns for audio performance is voice count – the number of simultaneous active sounds. Every active sound consumes CPU cycles for processing (spatialization, attenuation, effects) and memory for its raw data. For a racing game, you might have multiple cars, each with an engine, tire, and impact sounds, plus environmental ambience, music, and UI feedback. Managing this can quickly become complex. Unreal Engine’s Sound Concurrency system is designed to address this. It allows you to define rules for how sounds in a specific group should behave when too many try to play at once. You can set limits on the number of active sounds in a group, define prioritization (e.g., player’s engine sound over AI car engines), or specify how sounds should be “stolen” (e.g., stopping the quietest sound to make room for a new, louder one). This is indispensable for preventing your audio engine from becoming overloaded.
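The core of a concurrency limit can be sketched as a fixed number of slots plus a rule for what happens when a new voice arrives at the limit. The rule names below loosely mirror the options discussed in this section; the volume, priority, and ordering bookkeeping is a simplified, hypothetical model, not Unreal's Sound Concurrency implementation.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

enum class ResolutionRule { PreventNew, StopOldest, StopQuietest, StopLowestPriority };

struct Voice {
    std::string name;
    float volume;    // current audible volume, 0..1
    float priority;  // higher means more important
    int startOrder;  // lower means it started earlier
};

class ConcurrencyGroup {
public:
    ConcurrencyGroup(std::size_t maxCount, ResolutionRule rule) : maxCount_(maxCount), rule_(rule) {
        assert(maxCount_ > 0);
    }

    // Returns true if the new voice gets to play (possibly by stealing a slot).
    bool TryPlay(const std::string& name, float volume, float priority) {
        const Voice incoming{name, volume, priority, nextOrder_++};
        if (voices_.size() < maxCount_) {
            voices_.push_back(incoming);
            return true;
        }
        auto victim = voices_.end();
        switch (rule_) {
            case ResolutionRule::PreventNew:
                return false;
            case ResolutionRule::StopOldest:
                victim = std::min_element(voices_.begin(), voices_.end(),
                    [](const Voice& a, const Voice& b) { return a.startOrder < b.startOrder; });
                break;
            case ResolutionRule::StopQuietest:
                victim = std::min_element(voices_.begin(), voices_.end(),
                    [](const Voice& a, const Voice& b) { return a.volume < b.volume; });
                if (victim->volume > volume) return false;  // newcomer would be the quietest
                break;
            case ResolutionRule::StopLowestPriority:
                victim = std::min_element(voices_.begin(), voices_.end(),
                    [](const Voice& a, const Voice& b) { return a.priority < b.priority; });
                if (victim->priority > priority) return false;  // newcomer ranks lowest
                break;
        }
        *victim = incoming;  // stop the victim and reuse its slot
        return true;
    }

    const std::vector<Voice>& Voices() const { return voices_; }

private:
    std::size_t maxCount_;
    ResolutionRule rule_;
    int nextOrder_ = 0;
    std::vector<Voice> voices_;
};
```

With a limit of two and a Stop Quietest rule, the player's engine replaces the quieter AI engine, while a distant engine quieter than both active voices is simply not started.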

Beyond voice count, efficient management of audio assets themselves is vital. This includes using appropriate compression settings and sample rates for your Sound Waves. While uncompressed WAV files offer the highest quality, they consume significant memory. For most in-game sounds, especially longer loops like engine hums or ambience, lossy compression (like Ogg Vorbis or even ADPCM) can dramatically reduce file size and memory footprint with minimal perceived quality loss. Similarly, using a lower sample rate (e.g., 22 kHz instead of 44.1 kHz) for distant or less critical sounds can save resources. Streaming audio for very long tracks (like background music) or large ambience loops ensures they don’t consume memory upfront. By thoughtfully optimizing these aspects, you can maintain a rich audio experience without sacrificing overall project performance, which is paramount for real-time applications.
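The savings from compression and lower sample rates follow from simple arithmetic: uncompressed size is sample rate × channels × bytes per sample × duration. The helpers below compute that, plus a size at an assumed fixed compression ratio. The roughly 4:1 figure for 4-bit ADPCM versus 16-bit PCM ignores block headers, and variable-rate codecs such as Ogg Vorbis do not have a fixed ratio.

```cpp
#include <cassert>
#include <cstdint>

// Uncompressed PCM size in bytes: sampleRate * channels * bytesPerSample * seconds.
std::uint64_t PcmBytes(std::uint32_t sampleRate, std::uint32_t channels,
                       std::uint32_t bitsPerSample, double seconds) {
    assert(bitsPerSample % 8 == 0 && seconds >= 0.0);
    const double bytes =
        static_cast<double>(sampleRate) * channels * (bitsPerSample / 8) * seconds;
    return static_cast<std::uint64_t>(bytes + 0.5);  // round to the nearest byte
}

// Approximate size at an assumed fixed compression ratio (e.g. ~4.0 for ADPCM).
std::uint64_t CompressedBytes(std::uint64_t pcmBytes, double ratio) {
    assert(ratio >= 1.0);
    return static_cast<std::uint64_t>(static_cast<double>(pcmBytes) / ratio + 0.5);
}
```

A 30-second stereo loop at 44.1 kHz and 16 bits takes 5,292,000 bytes (about 5 MB); halving the sample rate gives 2,646,000 bytes, and a 4:1 ADPCM-style ratio brings the original down to about 1,323,000 bytes.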

To ensure your automotive audio runs smoothly, create Sound Concurrency assets (Right-click in Content Browser > Sounds > Sound Concurrency) and apply them to relevant Sound Cues and MetaSounds. Define limits (e.g., max 8 engine sounds at once), prioritization (the player’s car always takes precedence), and resolution rules (Stop Oldest, Stop Quietest). This is critical for preventing audio overload in scenes with multiple vehicles.

Remember that audio optimization is an ongoing process. Regularly test your project on target hardware and use profiling tools to identify bottlenecks.

Unreal Engine provides robust tools for debugging and profiling audio, which are essential for identifying performance issues and ensuring your mix is behaving as intended. The primary tool is the Audio Mixer Debugger (accessible via Window > Developer Tools > Audio Mixer Debugger), which offers a real-time view of the audio system while your project runs.

Additionally, the Stat Audio command (typed into the console) provides a summary of audio performance metrics directly in your viewport. For more in-depth analysis, the Unreal Insights profiler can capture detailed CPU and memory usage over time, allowing you to pinpoint specific audio threads or assets that are consuming excessive resources. Regular profiling, especially during development phases and on target hardware, will help you maintain a highly performant and polished audio experience for your automotive projects, ensuring that the sound complements the visual quality of your 3D car models without compromise. For further reading on performance, consult the official Unreal Engine Audio Debugging documentation.

As we’ve explored, crafting truly immersive experiences in Unreal Engine goes far beyond stunning visuals. While a meticulously detailed 3D car model from 88cars3d.com might capture the eye, it’s the sophisticated interplay of spatial sound and precise mixing that truly engages the senses and transports the user into the virtual world. From the foundational architecture of Sound Waves and Cues to the advanced capabilities of Submixes, Blueprint, and MetaSounds, Unreal Engine provides a comprehensive toolkit for audio designers to sculpt compelling sonic landscapes for any automotive project.

Mastering spatial audio with attenuation settings, leveraging hierarchical control with Sound Classes, and finessing your mix with Submixes and effects like EQ and compression are essential steps in creating a believable and dynamic auditory experience. Furthermore, integrating interactive audio through Blueprint and harnessing the procedural power of MetaSounds allows for unparalleled realism and responsiveness, making engine sounds evolve organically and environments react dynamically to user input. Crucially, optimizing your audio for performance—managing voice counts with concurrency and efficient asset management—ensures that these rich soundscapes run smoothly across various platforms, from high-end visualization setups to performance-sensitive VR applications.

The journey to a truly immersive automotive experience in Unreal Engine is a holistic one, where visual fidelity and auditory excellence march hand-in-hand. By applying the techniques and best practices outlined in this guide, you can elevate your projects from merely looking great to sounding absolutely spectacular, offering a complete sensory journey for your audience. So, next time you’re importing a high-quality car model, remember that the silence it brings is an opportunity – an opportunity to fill it with a rich, dynamic, and unforgettable soundscape that truly brings your virtual automotive world to life.

Texture: Yes

Material: Yes

Download the Buick Regal 3D Model featuring a classic American sedan design, detailed exterior, and optimized interior. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $10.79

Texture: Yes

Material: Yes

Download the Buick LaCrosse 3D Model featuring professional modeling and texture work. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $10.79

Texture: Yes

Material: Yes

Download the Bugatti Type 41 Napoleon 3D Model featuring its iconic luxury design, detailed exterior, and opulent interior. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $10.79

Texture: Yes

Material: Yes

Download the Bugatti Type 57SC Atlantic Coupe 3D Model featuring its iconic streamlined body and classic design. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $10.79

Texture: Yes

Material: Yes

Download the Bugatti 16C Galibier-006 3D Model featuring its luxurious sedan design, detailed interior, and sophisticated exterior. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $10.79

Texture: Yes

Material: Yes

Download the BMW Vision Connected Drive Concept 3D Model featuring its innovative design, advanced connectivity, and sleek aesthetics. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $19.79

Texture: Yes

Material: Yes

Download the BMW Motorsport M1 E26 1981 3D Model featuring its iconic design, race-bred aerodynamics, and meticulously crafted details. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $20.79

Meta Description:

Texture: Yes

Material: Yes

Download the Cadillac CTS-V Coupe 3D Model featuring detailed exterior styling and realistic interior structure. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, AR VR, and game development.

Price: $13.9

Texture: Yes

Material: Yes

Download the Cadillac Fleetwood Brougham 3D Model featuring its iconic classic luxury design and detailed exterior and interior. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $10.79

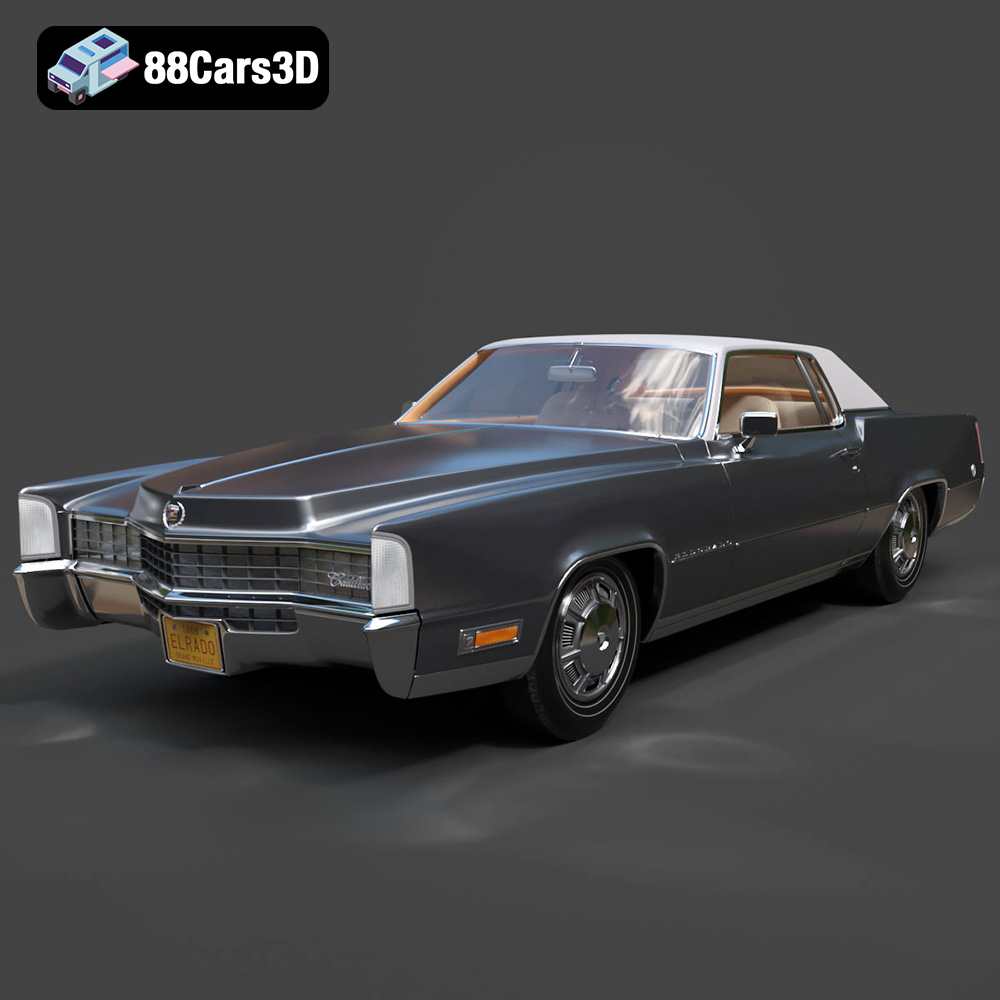

Texture: Yes

Material: Yes

Download the Cadillac Eldorado 1968 3D Model featuring its iconic elongated body, distinctive chrome accents, and luxurious interior. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $20.79