
In the visually stunning world of real-time rendering and automotive visualization, an often-underestimated element plays a pivotal role in delivering true immersion: sound. While immaculate 3D car models, powered by technologies like Nanite and rendered with Lumen, capture the eye, it’s the rich, spatial audio that truly engages the senses and anchors the experience in reality. For professionals leveraging platforms like 88cars3d.com for high-fidelity vehicle assets, understanding and mastering Unreal Engine’s robust audio system is not just an advantage—it’s a necessity.

From the visceral roar of an engine as it accelerates past, to the subtle creak of suspension over uneven terrain, or the distinct ‘thunk’ of a car door closing, every auditory detail contributes to the perceived realism and quality of your project. This comprehensive guide will delve deep into Unreal Engine’s audio capabilities, focusing on spatial sound design, advanced mixing techniques, and performance optimization. We’ll explore how to transform static visuals into dynamic, believable environments, ensuring that your automotive projects, whether for games, configurators, or virtual production, not only look incredible but sound equally captivating. Prepare to unlock the full potential of sound to elevate your Unreal Engine automotive experiences.

Before you can craft intricate soundscapes, you need to bring your audio assets into Unreal Engine and organize them effectively. The quality and format of your source audio files are paramount. Unreal Engine supports a wide range of audio formats, with WAV files being the most common and recommended for their uncompressed quality, typically at 44.1 kHz or 48 kHz sample rates and 16-bit or 24-bit depth. While compressed formats like OGG can be used for smaller file sizes, WAV offers the best fidelity for critical sounds like engine noises or high-impact collisions.
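Because WAV audio is stored uncompressed, its size follows directly from these settings: sample rate × channels × bytes per sample × duration. The helper below is plain standard C++ (not an Unreal API) for estimating the raw sample data of a source file, ignoring the small WAV header. For example, a 10-second, 48 kHz, 24-bit stereo engine loop works out to 48,000 × 2 × 3 × 10 = 2,880,000 bytes, or roughly 2.9 MB.

```cpp
#include <cassert>
#include <cstdint>

// Raw size of uncompressed PCM sample data in bytes:
// sample rate (Hz) * channels * bytes per sample * duration (s).
std::uint64_t pcmSizeBytes(std::uint32_t sampleRateHz, std::uint32_t channels,
                           std::uint32_t bitDepth, double seconds) {
    assert(bitDepth % 8 == 0 && "bit depth must be a whole number of bytes");
    assert(seconds >= 0.0);
    const std::uint64_t bytesPerSecond =
        static_cast<std::uint64_t>(sampleRateHz) * channels * (bitDepth / 8);
    return static_cast<std::uint64_t>(static_cast<double>(bytesPerSecond) * seconds);
}
```

This is the size of what you import; the memory a Sound Wave uses at runtime depends on the compression and loading settings it is cooked with.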

Upon import, Unreal Engine creates a Sound Wave asset, which is the foundational building block for all audio playback. These assets allow you to configure various properties, including compression settings, streaming behavior, and whether they should be looped. For automotive projects, careful management of engine loops, tire squeals, and environmental sounds is crucial. Organizing your Sound Waves into logical folders (e.g., ‘Audio/EngineSounds’, ‘Audio/SFX’, ‘Audio/Environment’) from the outset will save significant time and effort as your project grows.

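When importing audio, Unreal Engine offers several critical settings that directly impact performance and quality. In the Sound Wave asset editor, compression quality, loading and streaming behavior, and looping are the settings most worth reviewing for each asset.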

For large projects, it’s beneficial to establish a clear naming convention for your audio assets. This not only aids organization but also streamlines asset lookup and management, making collaboration more efficient.

While traditional Sound Cues (node-based graphs for mixing and modifying Sound Waves) have been a staple in Unreal Engine, MetaSounds represent a paradigm shift in how dynamic and procedural audio is created. Introduced in Unreal Engine 5, MetaSounds are a high-performance, node-based audio authoring system that allows for unparalleled real-time sound synthesis, procedural generation, and parameter control directly within the engine. This makes them incredibly powerful for automotive audio, where sounds often need to react dynamically to numerous vehicle parameters.

Imagine creating an engine sound that isn’t just a simple loop but a complex, layered synthesis driven by the vehicle’s RPM, gear, load, and even throttle input. With MetaSounds, you can expose each of these values as an input on the graph and update it at runtime from gameplay code.

For example, a MetaSound for a car engine might involve multiple pitched engine loops blended based on RPM, a separate turbo spool sound crossfaded based on boost pressure, and dynamic exhaust pops controlled by throttle release. The transition between these states would be smooth and natural, creating a far more convincing and immersive sound than static Sound Cues could achieve. When sourcing automotive assets from marketplaces such as 88cars3d.com, consider how their visual detail can be perfectly complemented by the dynamic audio capabilities of MetaSounds.
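The RPM-based blending described above can be sketched outside the engine. Assuming each engine loop was recorded at a known, steady RPM (hypothetical data), one common approach is to pitch each loop by currentRpm / recordedRpm and crossfade the two loops that bracket the current RPM with an equal-power curve, so perceived loudness stays steady through the blend. This is plain C++ illustrating the math a MetaSound graph could implement, not MetaSound API code.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// One recorded engine loop and the steady RPM it was recorded at (hypothetical data).
struct EngineLayer {
    double recordedRpm;
};

// Playback settings computed for one layer this frame.
struct LayerMix {
    double gain;   // linear amplitude, 0..1
    double pitch;  // playback-rate multiplier
};

// Pitches every layer to the current RPM and equal-power crossfades the two
// layers that bracket it. `layers` must be sorted by strictly ascending recordedRpm.
std::vector<LayerMix> mixEngineLayers(const std::vector<EngineLayer>& layers, double rpm) {
    assert(!layers.empty());
    const double kHalfPi = 1.5707963267948966;
    std::vector<LayerMix> mix(layers.size(), LayerMix{0.0, 1.0});
    for (std::size_t i = 0; i < layers.size(); ++i) {
        assert(layers[i].recordedRpm > 0.0);
        mix[i].pitch = rpm / layers[i].recordedRpm;
    }
    // Outside the recorded range, hold the nearest layer at full volume.
    if (rpm <= layers.front().recordedRpm) { mix.front().gain = 1.0; return mix; }
    if (rpm >= layers.back().recordedRpm) { mix.back().gain = 1.0; return mix; }
    for (std::size_t i = 0; i + 1 < layers.size(); ++i) {
        const double lo = layers[i].recordedRpm;
        const double hi = layers[i + 1].recordedRpm;
        if (rpm >= lo && rpm <= hi) {
            const double t = (rpm - lo) / (hi - lo);  // 0 at lower layer, 1 at upper
            mix[i].gain = std::cos(t * kHalfPi);      // cos^2 + sin^2 = 1: constant power
            mix[i + 1].gain = std::sin(t * kHalfPi);
            break;
        }
    }
    return mix;
}
```

Only the two nearest loops are audible at any moment, so each stays within a moderate pitch range. In a MetaSound, the same gains and pitches would typically be derived inside the graph from a single RPM input.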

The magic of spatial audio lies in its ability to convince the listener that sounds originate from specific points in a 3D space, interacting with the environment in a believable way. For automotive visualization, this means hearing a car approach from the left, its engine roar fading as it drives away, and its sound bouncing off nearby buildings. Unreal Engine provides sophisticated tools to achieve this level of realism, primarily through Attenuation Settings, Occlusion, and various Reverb and environmental effects.

Proper spatialization is crucial for grounding your high-fidelity 3D car models within the scene. A car that looks photo-realistic but has static, non-spatial audio will immediately break immersion. The goal is to make the sound source feel physically present in the world, allowing the player or viewer to intuitively understand its distance, direction, and interaction with physical obstacles.

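Attenuation Settings define how a sound’s volume and other properties change as the listener’s distance from the sound source varies. Every audio component or Sound Cue can reference an Attenuation Settings asset, allowing for centralized control and reusability. Key parameters include the attenuation shape and its inner radius, the falloff distance, the attenuation function that shapes the falloff curve (for example Linear, Logarithmic, or Natural Sound), and the spatialization method.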

When applying these settings, consider the real-world properties of the sound. A car horn will have a different falloff and spatialization profile than the subtle hum of its air conditioning. Experimenting with these curves is key to achieving a natural and believable soundscape. You can find detailed explanations of these parameters in the official Unreal Engine documentation.
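To make the falloff parameters concrete, the sketch below computes a volume multiplier from listener distance using an inner radius and a falloff distance (Unreal units are centimetres). It shows two shapes: a ramp that is linear in amplitude, and one that is linear in decibels down to a chosen level at the end of the falloff. Unreal’s own attenuation functions, including Natural Sound with its dB-at-max setting, may use different exact formulas; treat this as an illustration of the idea, not the engine’s implementation.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Linear-amplitude falloff: full volume inside innerRadius, silent at
// innerRadius + falloffDistance. All distances share one unit (e.g. cm).
double linearAttenuation(double distance, double innerRadius, double falloffDistance) {
    assert(falloffDistance > 0.0);
    if (distance <= innerRadius) return 1.0;
    const double t = (distance - innerRadius) / falloffDistance;  // 0..1 across the falloff
    return std::clamp(1.0 - t, 0.0, 1.0);
}

// Falloff that is linear in decibels: 0 dB at the inner radius, approaching
// -dbAtMax dB toward the end of the falloff, and silent at and beyond it.
double decibelAttenuation(double distance, double innerRadius, double falloffDistance,
                          double dbAtMax) {
    assert(falloffDistance > 0.0 && dbAtMax > 0.0);
    if (distance <= innerRadius) return 1.0;
    const double t = (distance - innerRadius) / falloffDistance;
    if (t >= 1.0) return 0.0;
    return std::pow(10.0, -dbAtMax * t / 20.0);  // convert dB to a linear amplitude
}
```

Halfway through the falloff, the linear ramp is still at 0.5 (about -6 dB), while the dB ramp with a 60 dB range is already at -30 dB (about 0.03), which is why the choice of function changes how quickly a passing car seems to recede.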

Real-world sound doesn’t just fall off with distance; it’s also affected by obstacles and environments. Occlusion simulates the blocking of sound by geometry, making sounds muffled or quieter when an object is between the listener and the source. Unreal Engine’s built-in occlusion traces between the listener and the sound source on a configurable collision channel and treats a blocked trace as occlusion.

For high-fidelity automotive scenes, especially those with intricate buildings or other vehicles, enabling trace-based occlusion can significantly enhance realism, making sounds behave as they would in a physical space. You can configure occlusion parameters within the Attenuation Settings, controlling the amount of volume reduction and low-pass filtering applied to occluded sounds.
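Occlusion results flip between blocked and clear as geometry passes through the line of sight, so the volume reduction and low-pass filtering are usually blended in over a short time rather than applied instantly. The sketch below models that blend in plain C++; the parameter names loosely mirror the occlusion options in Attenuation Settings, and every default value is an illustrative assumption, not an engine default.

```cpp
#include <algorithm>
#include <cassert>

// Tunables loosely mirroring occlusion options in Attenuation Settings.
// The default values are illustrative assumptions, not engine defaults.
struct OcclusionParams {
    double occludedVolume = 0.35;      // volume multiplier when fully occluded
    double occludedCutoffHz = 2000.0;  // low-pass cutoff when fully occluded
    double openCutoffHz = 20000.0;     // cutoff with a clear line of sight (effectively unfiltered)
    double interpolationTime = 0.1;    // seconds to blend fully in or out
};

// Values applied to the sound this frame.
struct OcclusionOutput {
    double volume;
    double cutoffHz;
};

// Moves a 0..1 occlusion amount toward the latest trace result at a fixed rate,
// so the sound does not pop when a pillar or another car briefly blocks it.
class OcclusionSmoother {
public:
    explicit OcclusionSmoother(OcclusionParams params) : p_(params) {
        assert(p_.interpolationTime > 0.0);
    }

    OcclusionOutput update(bool traceBlocked, double deltaSeconds) {
        const double target = traceBlocked ? 1.0 : 0.0;
        const double step = deltaSeconds / p_.interpolationTime;
        if (amount_ < target) amount_ = std::min(target, amount_ + step);
        else amount_ = std::max(target, amount_ - step);
        return {lerp(1.0, p_.occludedVolume, amount_),
                lerp(p_.openCutoffHz, p_.occludedCutoffHz, amount_)};
    }

private:
    static double lerp(double a, double b, double t) { return a + (b - a) * t; }
    OcclusionParams p_;
    double amount_ = 0.0;  // 0 = clear, 1 = fully occluded
};
```

Interpolating the cutoff linearly in hertz is the simplest choice; interpolating in log-frequency tends to sound smoother because hearing is roughly logarithmic in pitch.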

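Reverb simulates the reflections of sound within an environment, giving a sense of space, whether it’s an open field, a tunnel, or a vast showroom. Unreal Engine offers several ways to add reverb, including Audio Volumes, which apply reverb settings while the listener is inside them, and reverb effects placed on Submixes.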
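When reverb comes from Audio Volumes, overlapping volumes need a tie-breaker; Unreal uses each volume’s Priority and applies the highest-priority volume that contains the listener. The sketch below models that selection rule with hypothetical axis-aligned boxes and preset names in plain C++. Real Audio Volumes can be arbitrary brush shapes and also fade between reverb settings, which this ignores.

```cpp
#include <cassert>
#include <optional>
#include <string>
#include <vector>

struct Vec3 {
    double x, y, z;
};

// Hypothetical reverb region: an axis-aligned box, a priority, and a preset name.
struct ReverbZone {
    Vec3 minCorner;
    Vec3 maxCorner;
    double priority;
    std::string preset;

    bool contains(const Vec3& p) const {
        return p.x >= minCorner.x && p.x <= maxCorner.x &&
               p.y >= minCorner.y && p.y <= maxCorner.y &&
               p.z >= minCorner.z && p.z <= maxCorner.z;
    }
};

// Returns the preset of the highest-priority zone containing the listener,
// or std::nullopt when the listener is outside every zone.
std::optional<std::string> activeReverb(const std::vector<ReverbZone>& zones, const Vec3& listener) {
    const ReverbZone* best = nullptr;
    for (const ReverbZone& zone : zones) {
        assert(zone.minCorner.x <= zone.maxCorner.x && zone.minCorner.y <= zone.maxCorner.y &&
               zone.minCorner.z <= zone.maxCorner.z);
        if (zone.contains(listener) && (best == nullptr || zone.priority > best->priority)) {
            best = &zone;
        }
    }
    if (best == nullptr) return std::nullopt;
    return best->preset;
}
```

A tunnel volume placed inside a larger street volume only needs a higher priority to take over while the camera or car is inside it.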

By skillfully combining attenuation, occlusion, and environmental reverb, you can craft a truly immersive auditory experience that complements the visual fidelity of your 3D car models, making your virtual environments feel vibrant and alive.

A collection of great individual sounds is only half the battle. To create a cohesive and professional audio experience, meticulous mixing and mastering are essential. Unreal Engine provides a powerful hierarchical system of Sound Classes and flexible Submixes to give you granular control over your entire audio output. This allows you to balance different types of sounds, apply global effects, and ensure that no single element overpowers another, whether it’s the roar of a high-performance engine or the subtle click of a UI button in an automotive configurator.

Effective mixing is critical for maintaining clarity and impact. Without it, even the most beautifully designed sounds can become a muddled mess, detracting from the overall user experience. This is especially true in dynamic automotive scenes where multiple sounds—engine, tires, collisions, environment, music—can be playing simultaneously.

Sound Classes are hierarchical assets that allow you to group sounds and apply common properties and volume adjustments. Think of them as channels on a mixing board. For automotive projects, a well-defined hierarchy might place Engine, Tires, Impacts, UI, and Environment classes under a shared ‘SFX’ parent.

Each Sound Wave asset is assigned to a specific Sound Class. By adjusting the volume of a parent Sound Class (e.g., ‘SFX’), you can proportionally control the volume of all its children (Engine, Tires, Impacts, UI, Environment). Sound Classes also carry shared properties such as pitch and low-pass filtering, and Sound Mixes can adjust these properties at runtime.

A well-organized Sound Class hierarchy ensures consistency, makes balancing sounds easier, and allows for dynamic changes to the entire soundscape through Blueprints or C++.
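The parent-child volume relationship can be modeled as a walk up the hierarchy, multiplying each class’s volume along the way. The sketch below uses hypothetical class names and a plain std::map in place of real Sound Class assets; it illustrates why turning down ‘SFX’ scales every child class proportionally.

```cpp
#include <cassert>
#include <map>
#include <string>

// Hypothetical Sound Class node: its own volume and its parent's name ("" for the root).
struct SoundClassNode {
    std::string parent;
    double volume;
};

// Effective volume = this class's volume times the volume of every ancestor.
double effectiveVolume(const std::map<std::string, SoundClassNode>& classes,
                       const std::string& name) {
    double result = 1.0;
    std::string current = name;
    for (int depth = 0; !current.empty(); ++depth) {
        assert(depth < 64 && "sound class hierarchy is too deep or contains a cycle");
        const SoundClassNode& node = classes.at(current);  // throws std::out_of_range if missing
        result *= node.volume;
        current = node.parent;
    }
    return result;
}
```

With Master at 1.0, SFX at 0.8, and Engine at 0.5, the engine plays at 0.4; dropping SFX to 0.5 brings it to 0.25 without touching the Engine class itself.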

Submixes are the backbone of advanced mixing and mastering in Unreal Engine. They act as virtual audio buses, allowing you to route groups of sounds, apply effects, and control their final output. Every Sound Class can output to a specific Submix, providing incredible flexibility. The ‘Master Submix’ is the default output for all audio, but you can create custom Submixes for specific purposes, such as applying a shared effect like reverb or EQ to one group of sounds before it reaches the master output.

Using Submixes, you can create a professional-grade mixing console within Unreal Engine, allowing you to fine-tune every aspect of your automotive soundscape. This attention to detail ensures that the audio quality matches the visual fidelity of the stunning 3D car models you integrate into your projects.
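A submix behaves like a bus: it sums whatever is routed into it, processes the sum, and passes the result to its parent until everything reaches the final output. The sketch below models that routing with hypothetical submix names, float sample buffers, and a single gain standing in for each submix’s effect chain; it illustrates the bus idea, not Unreal’s audio mixer.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <string>
#include <vector>

using Buffer = std::vector<float>;

// Hypothetical submix: a gain standing in for its effect chain, plus the
// submix it feeds ("" marks the final output).
struct Submix {
    std::string parent;
    float gain;
};

// Adds src into dst sample by sample.
void accumulate(Buffer& dst, const Buffer& src) {
    assert(dst.size() == src.size());
    for (std::size_t i = 0; i < dst.size(); ++i) dst[i] += src[i];
}

// Processes submixes children-first: each one scales its summed input and
// adds the result into its parent. Returns the final output buffer.
Buffer renderOutput(const std::map<std::string, Submix>& submixes,
                    std::map<std::string, Buffer> inputs,  // sounds already routed per submix
                    const std::vector<std::string>& childrenFirst,
                    std::size_t blockSize) {
    Buffer output(blockSize, 0.0f);
    for (const std::string& name : childrenFirst) {
        const Submix& submix = submixes.at(name);
        Buffer& bus = inputs[name];
        bus.resize(blockSize, 0.0f);  // a submix with nothing routed to it stays silent
        for (float& sample : bus) sample *= submix.gain;
        if (submix.parent.empty()) {
            accumulate(output, bus);
        } else {
            Buffer& parentBus = inputs[submix.parent];
            parentBus.resize(blockSize, 0.0f);
            accumulate(parentBus, bus);
        }
    }
    return output;
}
```

Because the vehicle sounds share one bus, a single change to that bus (here, its gain) affects all of them at once, which is the same reason a shared reverb or compressor belongs on a submix rather than on each sound.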

Static sound effects, while important, can only go so far. The true power of Unreal Engine’s audio system shines when sounds dynamically react to player input, vehicle physics, and in-game events. Blueprint Visual Scripting provides an intuitive yet powerful way to integrate complex audio logic, allowing your 3D car models to not just look alive, but to sound genuinely responsive and interactive. From engine RPM changes to physics-driven crashes, Blueprint is your bridge between visual events and auditory feedback.

For immersive automotive experiences, every action should have a corresponding auditory response. This level of interactivity elevates a simple visualization into a truly engaging simulation, crucial for games, training, or interactive configurators.

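The engine sound is arguably the most crucial auditory element for a car. With Blueprint, you can create highly dynamic engine sounds that accurately reflect the vehicle’s state. This typically involves reading values such as RPM, throttle, and the current gear from the vehicle and mapping them onto parameters of the engine sound.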

A typical Blueprint setup would involve a ‘Tick’ event that continuously reads the vehicle’s RPM and updates the parameters of the playing MetaSound or Sound Cue. Gear change events would trigger one-shot sounds and potentially adjust the engine sound logic temporarily.
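The Tick-driven update described above can be sketched in plain C++. It assumes a hypothetical source that provides raw RPM and the current gear each frame; the smoothed RPM is the value you would pass to the engine sound (for example, as a float parameter on its Audio Component), and the gear flag marks the frame on which to fire a one-shot shift sound. The smoothing is frame-rate independent, so the engine note responds the same way at 30 and 120 fps.

```cpp
#include <cassert>
#include <cmath>

// Values a Tick handler would push to the engine sound each frame (hypothetical).
struct EngineAudioParams {
    double rpm;                 // smoothed RPM to send to the engine sound
    bool playGearShiftOneShot;  // true on the frame a gear change is detected
};

class EngineAudioDriver {
public:
    explicit EngineAudioDriver(double smoothingTimeSeconds) : smoothing_(smoothingTimeSeconds) {
        assert(smoothingTimeSeconds > 0.0);
    }

    // Called once per frame with the vehicle's raw state and the frame time.
    EngineAudioParams tick(double rawRpm, int gear, double deltaSeconds) {
        if (!initialized_) {
            smoothedRpm_ = rawRpm;
            lastGear_ = gear;
            initialized_ = true;
        }
        // Exponential smoothing whose response depends on elapsed time, not frame count.
        const double alpha = 1.0 - std::exp(-deltaSeconds / smoothing_);
        smoothedRpm_ += (rawRpm - smoothedRpm_) * alpha;
        const bool shifted = gear != lastGear_;
        lastGear_ = gear;
        return {smoothedRpm_, shifted};
    }

private:
    double smoothing_;
    double smoothedRpm_ = 0.0;
    int lastGear_ = 0;
    bool initialized_ = false;
};
```

Smoothing hides the frame-to-frame jitter that raw physics RPM often has, at the cost of a small lag; the smoothing time is the knob that trades responsiveness against stability.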

Beyond the engine, a car interacts with its environment in myriad ways that should be audibly represented. Blueprint allows you to connect these physical interactions, such as tire slip, suspension movement, and collisions, to sound playback.

By connecting these physics data streams to appropriate audio assets via Blueprint, your 3D car models will not only look like they are interacting with the world but will also sound like it, creating a truly believable and immersive automotive simulation.
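Collision audio usually needs two safeguards: ignore contacts too weak to matter, and rate-limit repeated hits so a scraping contact does not trigger a new sound every frame. The sketch below maps an impact strength (for example, the magnitude of the normal impulse reported by a hit event) to a one-shot volume; the thresholds, the cooldown, and the 0.1 volume floor are illustrative assumptions to tune per vehicle.

```cpp
#include <algorithm>
#include <cassert>
#include <optional>

// Maps a collision impulse to a one-shot volume, ignoring tiny contacts and
// rate-limiting repeats. Impulse units are whatever your physics callback reports.
class ImpactSoundGate {
public:
    ImpactSoundGate(double minImpulse, double maxImpulse, double cooldownSeconds)
        : minImpulse_(minImpulse), maxImpulse_(maxImpulse), cooldown_(cooldownSeconds) {
        assert(maxImpulse > minImpulse && cooldownSeconds >= 0.0);
    }

    // Returns the volume to play at, or std::nullopt if no sound should play.
    std::optional<double> onHit(double impulse, double nowSeconds) {
        if (impulse < minImpulse_) return std::nullopt;  // too weak to be heard
        if (lastPlay_ && nowSeconds - *lastPlay_ < cooldown_) return std::nullopt;
        lastPlay_ = nowSeconds;
        const double t = (impulse - minImpulse_) / (maxImpulse_ - minImpulse_);
        return std::clamp(t, 0.1, 1.0);  // assumed 0.1 floor keeps light hits audible
    }

private:
    double minImpulse_;
    double maxImpulse_;
    double cooldown_;
    std::optional<double> lastPlay_;  // time of the last sound that actually played
};
```

The same gate-and-scale pattern carries over to tire squeal, with wheel slip in place of impulse and a continuous loop volume in place of a one-shot.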

In real-time rendering, every millisecond and every megabyte counts. While visual fidelity often takes the spotlight (with features like Nanite handling millions of polygons and Lumen delivering global illumination), audio also contributes to the performance budget. High-quality spatial audio and complex mixing can consume CPU, memory, and even disk I/O. For high-fidelity automotive visualization and interactive applications, especially for AR/VR, optimizing your audio system is as critical as optimizing your visuals. An experience can be quickly ruined by audio glitches, dropouts, or an overall sluggish feel.

The goal is to deliver rich, immersive sound without compromising the smooth frame rate and responsiveness of your Unreal Engine project. This requires a balanced approach to asset management, engine configuration, and vigilant profiling.

One of the most common performance bottlenecks in audio is exceeding the available ‘voices’. A voice is a single sound instance that is currently playing. Unreal Engine caps the number of active voices, and once that limit is exceeded, sounds can drop out or fail to play. For automotive scenes, with potentially multiple cars, environmental sounds, UI feedback, and music, this limit can be reached quickly. To manage this, give the sounds that must always be heard, such as the player’s engine, a higher priority than incidental effects, and limit how many instances of repetitive sounds can play at the same time. You can also raise the maximum voice count in the project’s audio settings (Project Settings > Audio). While increasing it can prevent dropouts, it also increases CPU usage, so find a balance.

Balancing these settings ensures that your most important automotive sounds are always heard, while less critical sounds are managed efficiently without impacting performance.
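Priority-based voice limiting can be illustrated with a simplified model: when more sounds want to play than there are voices, keep the most important ones and cull the rest. The sketch below ranks hypothetical requests by priority, then by audible volume. Unreal’s actual voice management also involves concurrency rules and virtualization, so treat this as a conceptual model rather than the engine’s algorithm.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// A sound that wants to play this frame (hypothetical fields).
struct VoiceRequest {
    std::string name;
    double priority;  // higher = more important
    double volume;    // audible volume after attenuation, 0..1
};

// Keeps at most maxVoices requests: highest priority first, then loudest.
// The caller would stop or virtualize everything that was not kept.
std::vector<VoiceRequest> selectVoices(std::vector<VoiceRequest> requests, std::size_t maxVoices) {
    for (const VoiceRequest& r : requests) {
        assert(r.volume >= 0.0 && r.volume <= 1.0);
    }
    std::stable_sort(requests.begin(), requests.end(),
                     [](const VoiceRequest& a, const VoiceRequest& b) {
                         if (a.priority != b.priority) return a.priority > b.priority;
                         return a.volume > b.volume;
                     });
    if (requests.size() > maxVoices) requests.resize(maxVoices);
    return requests;
}
```

With two free voices, the player’s engine and a UI click (both high priority) survive, while a quiet AI engine and background ambience are culled first.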

Identifying audio performance issues requires dedicated profiling tools. Unreal Engine offers several ways to inspect your audio system:

- Audio Debugger: accessible via 'Audio.Debug 1' or the ‘Developer Tools’ menu, the Audio Debugger overlays real-time information about active voices, their locations, attenuation, and which Sound Classes and Submixes are active. This is invaluable for pinpointing sounds that are unexpectedly playing or consuming too many voices.
- Stat commands: console commands such as 'Stat Audio' provide a quick overview of active voices, memory usage, and CPU time spent on audio processes directly in the viewport.

By regularly profiling and debugging your audio, you can maintain optimal performance, ensuring that the high-quality 3D car models you integrate into your projects are accompanied by a flawlessly executed soundscape, enhancing the overall realism and user experience.

Unreal Engine’s audio system extends far beyond basic sound playback, offering powerful capabilities for a variety of advanced applications common in automotive visualization and real-time experiences. Whether you’re crafting a breathtaking cinematic trailer showcasing a new car model, developing an interactive AR/VR configurator, or contributing to a virtual production pipeline, the integration of sophisticated audio tools is paramount for delivering truly impactful and immersive results.

The flexibility of Unreal Engine allows artists and developers to push the boundaries of what’s possible, ensuring that every auditory detail enhances the visual spectacle, from the smallest nuance to the grandest soundscape.

When it comes to creating stunning cinematic trailers, product reveals, or virtual production sequences for your 3D car models, Sequencer is Unreal Engine’s powerful non-linear editor. While often lauded for its animation and camera control, Sequencer also provides a comprehensive suite of tools for orchestrating cinematic audio with precision and artistic flair. Integrating audio into Sequencer allows you to place sounds on Audio tracks, align them precisely with animation and camera cuts, and keyframe properties such as volume and pitch over time.

For example, you could animate the pitch of an engine sound over time to perfectly match a dramatic acceleration shot, or layer multiple impact sounds precisely with a slow-motion crash sequence, ensuring every visual cue is reinforced by a corresponding auditory event. The detailed 3D car models from 88cars3d.com deserve a cinematic treatment that is equally refined on the auditory front.
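Animating an engine’s pitch over a shot comes down to evaluating a keyframed curve at each frame’s time. The sketch below models that with linear interpolation between hypothetical pitch-multiplier keys, holding the first and last values outside the keyed range; Sequencer curves also support cubic and constant interpolation, which this ignores.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// A keyframe on a curve such as a pitch multiplier (time in seconds).
struct Key {
    double time;
    double value;
};

// Linearly interpolates between keys sorted by ascending time;
// holds the end values outside the keyed range.
double evaluateCurve(const std::vector<Key>& keys, double t) {
    assert(!keys.empty());
    if (t <= keys.front().time) return keys.front().value;
    if (t >= keys.back().time) return keys.back().value;
    // First key strictly after t; the key before it starts the active segment.
    const auto next = std::upper_bound(keys.begin(), keys.end(), t,
                                       [](double time, const Key& k) { return time < k.time; });
    const auto prev = next - 1;
    const double u = (t - prev->time) / (next->time - prev->time);
    return prev->value + (next->value - prev->value) * u;
}
```

Keys at 0.8 (t = 0 s) and 1.6 (t = 2 s) give a pitch of 1.2 halfway through a two-second acceleration shot, then hold at 1.6 once the car is at speed.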

Augmented Reality (AR) and Virtual Reality (VR) experiences for automotive visualization demand an even higher level of audio immersion. The spatial accuracy of sound becomes paramount to maintain the illusion of presence and enhance user engagement. Unreal Engine supports this with features such as binaural (HRTF-based) spatialization provided through spatialization plugins.

By meticulously crafting your audio for AR/VR, you can transform a visual car model into a truly tangible, present object, enhancing the sense of realism and user connection. Leveraging these advanced audio techniques ensures that your Unreal Engine projects deliver an unparalleled sensory experience, whether they showcase the intricate details of a car from 88cars3d.com in a cinematic sequence or let users experience it firsthand in VR.

The journey through Unreal Engine’s audio system reveals a profound truth: sound is not merely an add-on but a fundamental pillar of immersion. For automotive visualization, game development, and real-time rendering, mastering spatial audio and sophisticated mixing techniques is as crucial as perfecting your PBR materials or optimizing high-poly geometry with Nanite. By meticulously crafting dynamic engine sounds using MetaSounds, leveraging accurate attenuation and occlusion, and expertly balancing your soundscape through Sound Classes and Submixes, you elevate your projects from visually stunning to truly unforgettable.

We’ve explored how Blueprint visual scripting breathes life into vehicle physics, connecting every acceleration, gear change, and collision to a responsive auditory cue. We’ve also delved into vital optimization strategies, ensuring that your rich audio experiences run smoothly on diverse platforms, from high-end virtual production stages to memory-constrained AR/VR devices. Finally, understanding how to orchestrate cinematic audio with Sequencer and tailor sounds for immersive AR/VR experiences empowers you to tell more compelling stories and create more engaging interactive products.

The detailed 3D car models available on platforms like 88cars3d.com provide an exceptional visual foundation. By investing equal effort into their sonic counterparts, you create a cohesive, believable, and utterly captivating experience. Embrace the power of sound in Unreal Engine, and let your automotive creations resonate with unparalleled realism and depth. Start experimenting with these techniques today, and listen to your projects come alive.

Meta Description:

Texture: Yes

Material: Yes

Download the Cadillac CTS-V Coupe 3D Model featuring detailed exterior styling and realistic interior structure. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, AR VR, and game development.

Price: $13.9

Texture: Yes

Material: Yes

Download the Cadillac Fleetwood Brougham 3D Model featuring its iconic classic luxury design and detailed exterior and interior. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $10.79

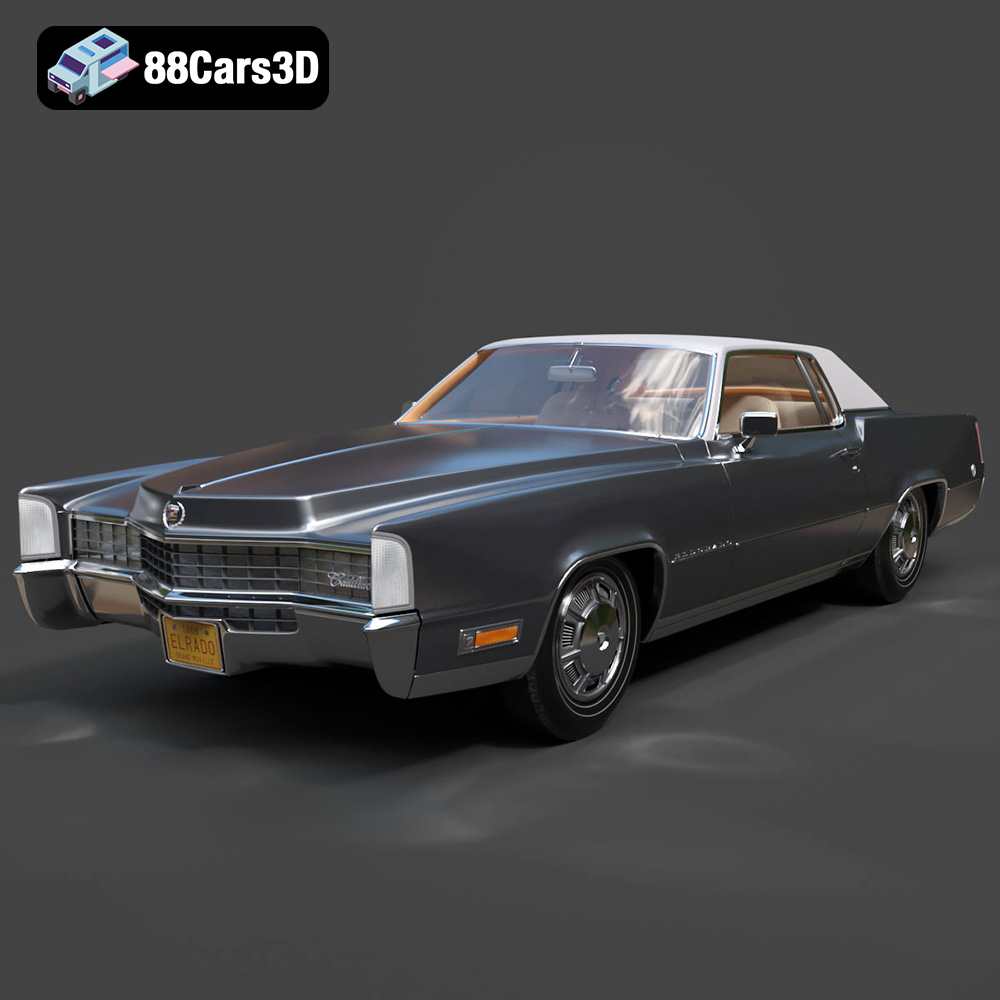

Texture: Yes

Material: Yes

Download the Cadillac Eldorado 1968 3D Model featuring its iconic elongated body, distinctive chrome accents, and luxurious interior. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $20.79

Texture: Yes

Material: Yes

Download the Cadillac CTS SW 2010 3D Model featuring a detailed exterior, functional interior elements, and realistic materials. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $10.79

Texture: Yes

Material: Yes

Download the Cadillac Fleetwood Brougham 1985 3D Model featuring its iconic classic luxury design and detailed craftsmanship. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $10.79

Texture: Yes

Material: Yes

Download the Cadillac Eldorado 1978 3D Model featuring accurately modeled exterior, detailed interior, and period-correct aesthetics. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $10.79

Texture: Yes

Material: Yes

Download the Cadillac STS-005 3D Model featuring a detailed exterior and interior. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $22.79

Texture: Yes

Material: Yes

Download the Cadillac Eldorado Convertible (1959) 3D Model featuring iconic fins, luxurious chrome details, and a classic vintage design. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $20.79

Texture: Yes

Material: Yes

Download the Cadillac DTS-005 3D Model featuring its iconic luxury design, detailed interior, and realistic exterior. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $10.79

Texture: Yes

Material: Yes

Download the Buick LeSabre 1998 3D Model featuring a classic American full-size sedan design. Includes .blend, .fbx, .obj, .glb, .stl, .ply, .unreal, and .max formats for rendering, simulation, and game development.

Price: $10.79